The use of Graphics Processing Units for rendering is well known, but their power for general parallel computation has only recently been explored. Parallel algorithms running on GPUs can often achieve up to 100x speedup over similar CPU algorithms, with many existing applications for physics simulations, signal processing, financial modeling, neural networks, and countless other fields.

This course will cover programming techniques for the GPU. The course will introduce NVIDIA’s parallel computing language, CUDA. Beyond covering the CUDA programming model and syntax, the course will also discuss GPU architecture, high performance computing on GPUs, parallel algorithms, CUDA libraries, and applications of GPU computing.

Problem sets will cover performance optimization and specific GPU applications such as numerical mathematics, medical imaging, finance, and other fields.

Lecture 1

GPU Computing: Step by Step

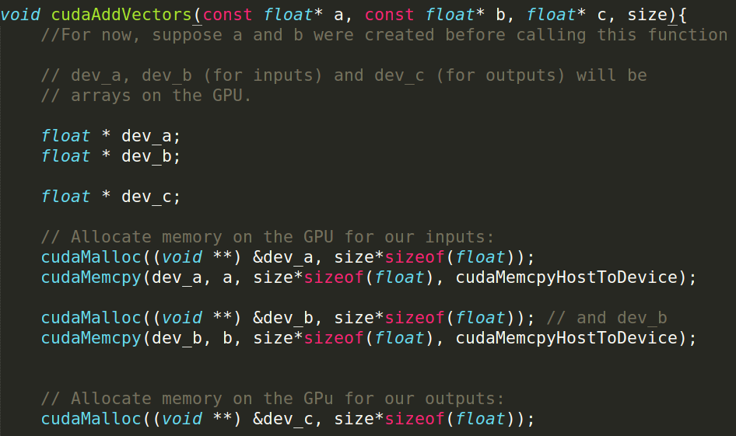

- Setup inputs on the host (CPU-accessible memory)

- Allocate memory for outputs on the host

- Allocate memory for inputs on the GPU

- Allocate memory for outputs on the GPU

- Copy inputs from host to GPU

- Start GPU kernel (function that executed on gpu)

- Copy output from GPU to host

NOTE: Copying can be asynchronous, and unified memory management is available

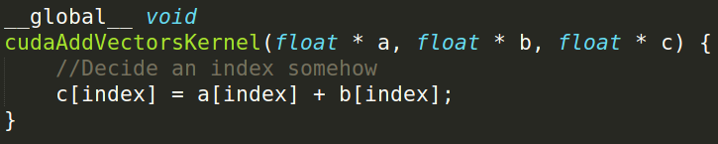

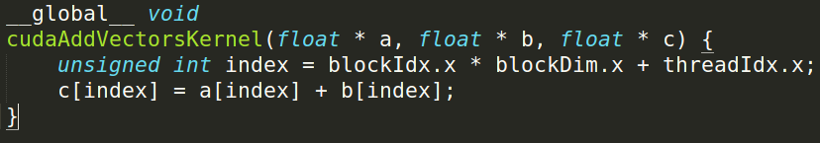

The Kernels

- Our “parallel” function

- Given to each thread

Simple implementation:

Indexing

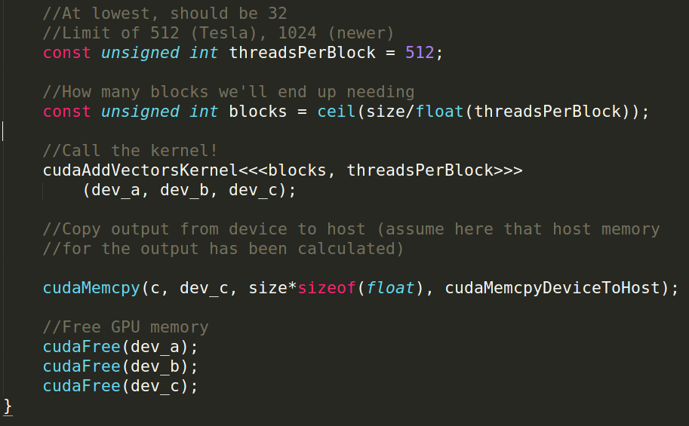

calling the kernel

Lecture 2 Intro to the simd lifestyle and GPU internals

Can use GPU to solve highly parallelizable problems. Looked at the a[] + b[] -> c[] example

CUDA is a straightforward extension to C++:

- Separate CUDA code into

.cuand.cuhfiles - We compile with

nvcc, NVIDIA’s compiler for CUDA, to create object files (.o files)

NVCC and g++

CUDA is simply an extension of other bits of code you write!!!!

- Evident in .cu/.cuh vs .cpp/.hpp distinction

- .cu/.cuh is compiled by nvcc to produce a .o file

Since CUDA 7.0 / 9.0 there’s support by NVCC for most C++11 / C++14 language features, but make sure to read restrictions for device code

https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#c-cplusplus-language-support

- .cpp/.hpp is compiled by g++ and the .o file from the CUDA code is simply linked in using a “#include xxx.cuh” call. (No different from how you link in .o files from normal C++ code)

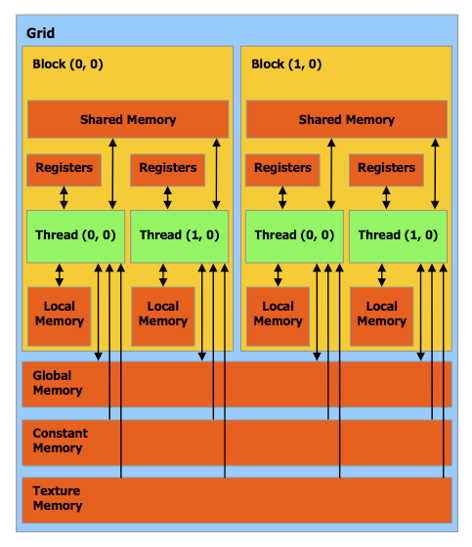

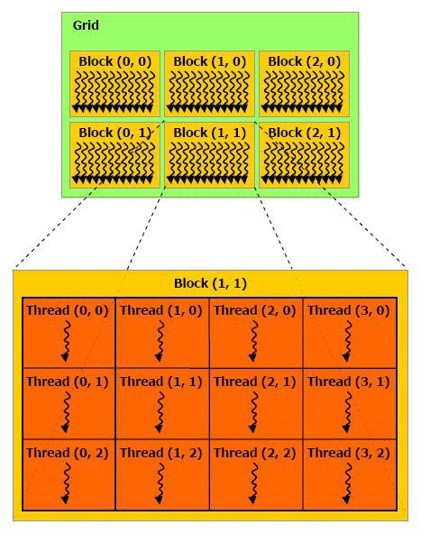

Thread Block Organization

Keywords you MUST know to code in CUDA:

- Thread - Distributed by the CUDA runtime (threadIdx)

- Block - A user defined group of 1 to ~512 threads (blockIdx)

- Grid - A group of one or more blocks. A grid is created for each CUDA kernel function called.

Imagine thread organization as an array of thread indices

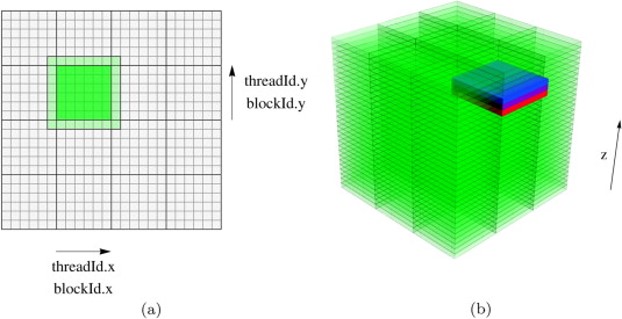

For many parallelizeable problems involving arrays, it’s useful to think of multidimensional arrays.

- E.g. linear algebra, physical modelling, etc, where we want to assign unique thread indices over a multidimensional object

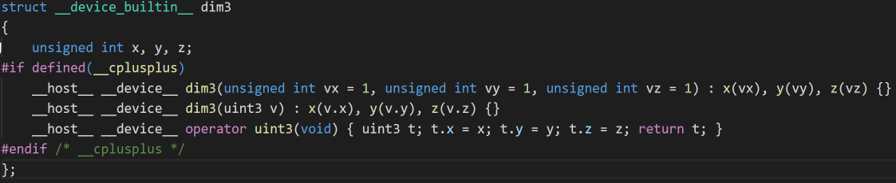

- So, CUDA provides built in multidimensional thread indexing capabilities with a struct called dim3!

Dims

dim3 is a struct (defined in vector_types.h) to define your Grid and Block dimensions.

Works for dimensions 1, 2, and 3:

- dim3 grid(256); // defines a grid of 256 x 1 x 1 blocks

- dim3 block(512, 512); // defines a block of 512 x 512 x 1 threads

- foo<<<grid, block>>>(…);

Grid/Block/Thread Visualized

Single Instruction, Multiple Data (SIMD)

- SIMD describes a class of instructions which perform the same operation on multiple registers simultaneously.

- Example: Add some scalar to 3 registers, storing the output for each addition in those registers. Used to increase the brightness of a pixel

- CPUs also have SIMD instructions and are very important for applications that need to do a lot of number crunching. Video codecs like x264/x265 make extensive use of SIMD instructions to speed up video encoding and decoding.

SIMD continued

Converting an algorithm to use SIMD is usually called “Vectorizing”

- Not every algorithm can benefit from this or even be vectorized at all, e.x. Parsing.

- Using SIMD instructions is not always beneficial though. 1) Even using the SIMD hardware requires additional power, and thus waste heat. 2) If the gains are small it probably isn’t worth the additional complexity.

- Optimizing compilers like GCC and LLVM are still being trained to be able to vectorize code usefully, though there has been many exciting developments on this front in the last 2 years and is an active area of study. https://polly.llvm.org/

SIMT (Single Instruction, Multiple Thread) Architecture

A looser extension of SIMD which is what CUDA’s computational model uses. Key differences:

- Single instruction, multiple register sets

Wastes some registers, but mostly necessary for following two points - Single instruction, multiple addresses (i.e. parallel memory access!)

Memory access conflicts! Will discuss next week. - Single instruction, multiple flow paths (i.e. if statements are allowed!!!)

Introduces slowdowns, called ‘warp-divergence.’

Good description of differences

https://yosefk.com/blog/simd-simt-smt-parallelism-in-nvidia-gpus.html

https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#hardware-implementation

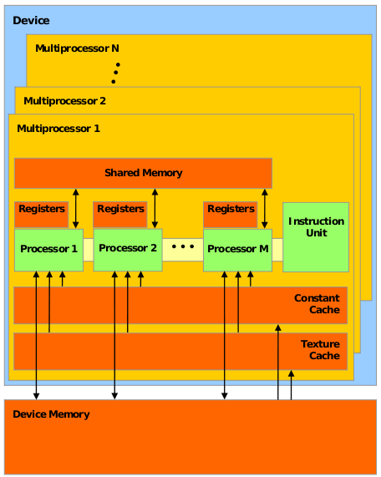

Important CUDA Hardware Keywords

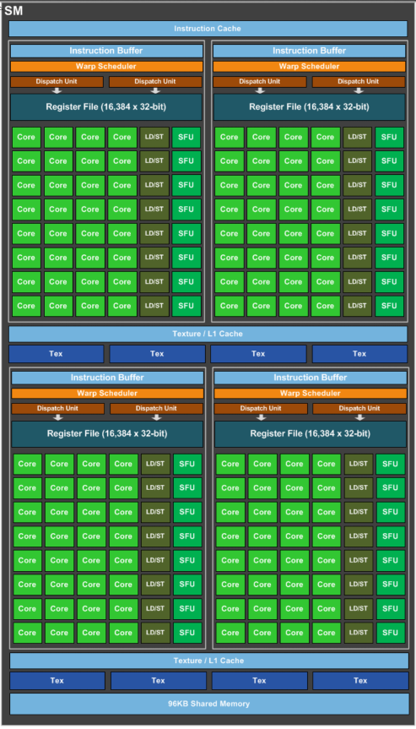

- Streaming Multiprocessor (SM), Each contains (usually) 128 single precision CUDA cores (which execute a thread) and their associated cache. This is a standard based on your machines Compute Capability.

- Warp – A unit of up to 32 threads (all within the same block). Each SM creates and manages multiple warps via the block abstraction. Assigns to each warp a Warp Scheduler to schedule the execution of instructions in each warp.

- Warp Divergence. A condition where threads within a warp need to execute different instructions in order to continue executing their kernel.

1)In order to maintain multiple flow path per instruction, threads in different ‘execution branches’ during an instruction are given no-ops.

2) Causes threads to execute sequentially, in most cases ruining parallel performance.

3) As of the Kepler (2012) architecture each Warp can have at most 2 branches, starting with Volta (2017) this condition has been nearly eliminated. For this class assume your code must only branch at most twice as we are not yet allocating Volta GPUs to this class. Inpendent Thread Scheduling fixes this problem by maintianing an execution state per thread. See Compute Capability 7.x

What a modern GPU looks like

inside a GPU

Think of Device Memory (we will also refer to it as Global Memory) as a RAM for your GPU

- Faster than getting memory from the actual RAM but still have other options

- Will come back to this in future lectures

GPUs have many Streaming Multiprocessors (SMs)

- Each SM has multiple processors but only one instruction unit (each thread shares program counter)

- Groups of processors must run the exact same set of instructions at any given time with in a single SM

When a kernel (the thing you define in .cu files) is called, the task is divided up into threads

- Each thread handles a small portion of the given task

The threads are divided into a Grid of Blocks. Both Grids and Blocks are 3 dimensional

e.g.

1 | dim3 dimBlock(8, 8, 8); |

However, we’ll often only work with 1 dimensional grids and blocks

e.g.

1 | Kernel<<<block_count, block_size>>>(…); |

Maximum number of threads per block count is usually 512 or 1024 depending on the machine

Maximum number of blocks per grid is usually 65535.

- If you go over either of these numbers your GPU will just give up or output garbage data

- Much of GPU programming is dealing with this kind of hardware limitations! Get used to it

- This limitation also means that your Kernel must compensate for the fact that you may not have enough threads to individually allocate to your data points.

Each block is assigned to an SM

Inside the SM, the block is divided into Warps of threads

Warpsconsist of 32 threads. CUDA defined constant in cuda_runtime.h- All 32 threads MUST run the exact same set of instructions at the same time. Due to the fact that there is only one instruction unit

- Warps are run concurrently in an SM

- If your Kernel tries to have threads do different things in a single warp (using if statements for example), the two tasks will be run sequentially. Called

Warp Divergence(NOT GOOD)

In Fermi Architecture (i.e. GPUs with Compute Capability 2.x), each SM has 32 cores, later architectures have more.

e.g. GTX 400, 500 series

32 cores is not what makes each warp have 32 threads. Previous architecture also had 32 threads per warp but had less than 32 cores per SM

Some early Pascal (2016) GPUs (GP100) had 64 cores per SM, but later chips in that generation (GP104) had 128 core model.

Turing (2018) maintains 128 core standard

Shown here is a Pascal GP104 GPU Streaming Multiprocessor that can be found in a GTX1080 graphics card.

The exact amount of Cache and Shared Memory differ between GPU models, and even more so between different architectures.

Whitepapers with exact information can be gotten from Nvidia (use Google)

https://international.download.nvidia.com/geforce-com/international/pdfs/GeForce_GTX_1080_Whitepaper_FINAL.pdf

http://www.nvidia.com/content/PDF/product-specifications/GeForce_GTX_680_Whitepaper_FINAL.pdf